You ask your favorite chatbot about persistent headaches, and it suggests a supplement stack. Sounds helpful, right? But what if that advice ignores a medication you’re taking or misses signs of something serious?

AI tools have become go-to sources for health questions. They’re fast, accessible, and don’t judge. But they also carry real dangers that most people don’t spot until it’s too late.

AI-generated wellness advice often lacks personalization, medical context, and accountability. These tools can’t access your health records, understand complex interactions between conditions, or take legal responsibility for harm. Learning to spot warning signs like overly generic responses, missing disclaimers, and confident claims without sources helps you use AI safely while knowing when to consult actual [healthcare providers](https://www.who.int/) instead.

Why AI Gets Health Advice Wrong

Artificial intelligence learns from massive datasets scraped from the internet. That means it absorbs accurate medical information alongside outdated claims, marketing copy, and complete nonsense.

The system has no reliable way to distinguish a peer-reviewed study from a random blog post. It weights information by statistical patterns, not by whether it’s true.

This creates a fundamental problem. AI might confidently recommend a treatment that its training data describes as working for thousands of people, while completely missing why that treatment would be dangerous for you specifically.

Your unique medical history, current medications, allergies, and underlying conditions don’t exist in the AI’s knowledge base. It’s giving advice to a generic human, not to you.

Even worse, these systems occasionally “hallucinate” information that sounds authoritative but is completely fabricated. They might cite studies that don’t exist or describe treatment protocols no medical professional would recognize.

Red Flags That Signal Unreliable Advice

Certain patterns appear consistently in problematic AI health recommendations. Learning to spot them takes practice but becomes second nature.

Watch for these warning signs:

- Advice that sounds oddly confident about complex medical questions

- Recommendations that don’t ask about your current medications or conditions

- Suggestions to avoid seeing a doctor for concerning symptoms

- Claims about “miracle cures” or treatments that work for everyone

- Missing citations or vague references to “studies show”

- Contradictory information within the same response

- Advice that conflicts with established medical guidelines

Generic responses present another major risk. If the AI gives you the same basic answer it would give anyone asking about back pain, knee problems, or digestive issues, it’s not actually helping you.

Real medical advice requires personalization. A healthcare provider asks follow-up questions, considers your history, and tailors recommendations to your situation.

AI can’t do that without access to information it doesn’t have.

Common Categories Where AI Fails Hardest

Some health topics prove especially problematic for artificial intelligence systems. Understanding these vulnerable areas helps you know when to be extra cautious.

Mental Health Treatment

AI chatbots frequently suggest therapy techniques or coping strategies that sound reasonable on the surface. But mental health treatment requires a nuanced understanding of individual trauma, brain chemistry, and psychological patterns.

A suggestion that helps one person might trigger a crisis in another. AI can’t assess suicide risk, recognize warning signs of serious conditions, or provide the human connection that makes therapy effective.

Medication Interactions

This represents one of the most dangerous areas for AI advice. Drug interactions depend on dozens of factors including dosage, timing, individual metabolism, and other medications.

AI might know that Drug A and Drug B can interact. But it doesn’t know you take Drug C, which changes everything. It can’t access your pharmacy records or know whether your liver processes medications differently from the average person’s.

Symptom Diagnosis

People love asking AI what their symptoms mean. The problem? Hundreds of conditions share similar symptoms.

Fatigue could signal anything from iron deficiency to thyroid problems to serious illness. AI tends to suggest common causes while missing rare but critical conditions that need immediate attention.

Supplement Recommendations

The wellness industry loves supplements, and AI has absorbed countless articles promoting them. But supplements can cause serious harm when combined with medications or certain health conditions.

AI rarely warns about contraindications or asks the right questions before suggesting you add something new to your routine.

How AI Health Advice Differs From Medical Care

Understanding what AI actually does versus what doctors do clarifies why the risks matter so much.

| What AI Provides | What Medical Care Provides |
|---|---|
| Pattern-based suggestions from internet data | Diagnosis based on examination and tests |
| Generic advice for common scenarios | Personalized treatment plans |
| Information without legal accountability | Licensed professionals who can be held responsible |
| Responses that can’t adapt to new information | Ongoing monitoring and adjustment |
| No access to your medical history | Complete context of your health journey |
| No ability to order tests or prescribe | Authority to provide comprehensive treatment |

This table isn’t meant to dismiss AI entirely. These tools have legitimate uses for general health education and understanding basic concepts.

But they can’t replace the judgment, experience, and accountability that come with actual medical care.

The Personalization Problem

Here’s a scenario that illustrates the core issue.

Two people ask an AI chatbot about joint pain. Person A is a 28-year-old runner with no health conditions. Person B is a 52-year-old with diabetes, high blood pressure, and a family history of autoimmune disease.

The AI might give both people similar advice about rest, ice, and anti-inflammatory medications. For Person A, this could be perfectly fine. For Person B, it might mask symptoms of a serious condition that needs immediate attention.

The AI doesn’t know to ask about age, medical history, medication list, or family history. It responds to the question asked without gathering the context needed for safe recommendations.

This creates a false sense of personalization. The response feels tailored because it addresses your specific question. But it’s actually dangerously generic.

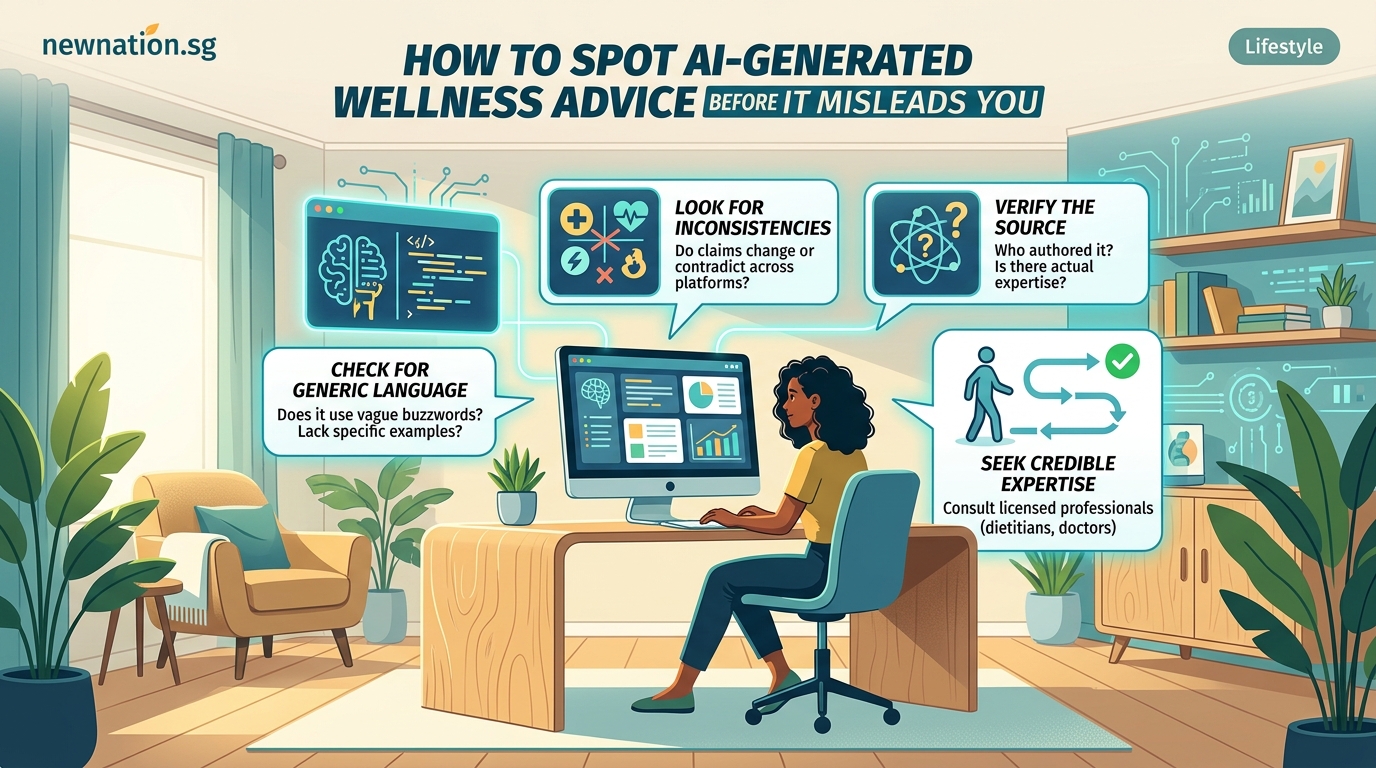

Steps to Verify AI Health Information

You can still use AI tools for health questions if you approach them correctly. The key is verification and context.

- Treat AI responses as starting points for research, never as final answers.

- Cross-reference any specific claims with reputable medical websites like Mayo Clinic or Cleveland Clinic.

- Note whether the AI provides sources and actually check those sources yourself.

- Run important recommendations past your actual healthcare provider before acting on them.

- Pay attention to what the AI doesn’t ask about, not just what it tells you.

- Look for medical disclaimers and take them seriously when they appear.

This process takes more time than just following AI advice directly. That’s the point.

Health decisions deserve careful consideration, not convenience.

When AI Advice Becomes Actively Dangerous

Some situations move beyond “not ideal” into genuinely risky territory.

Never rely on AI for chest pain, severe headaches, sudden vision changes, difficulty breathing, or any symptom that feels like an emergency. These require immediate medical attention, not internet research.

Don’t use AI to adjust medication dosages, even if the suggestion sounds reasonable. Dosing depends on factors AI can’t assess.

Avoid letting AI talk you out of seeing a doctor. If something feels wrong enough that you’re asking about it, get it checked by a professional.

Be especially wary of AI advice about children’s health. Kids aren’t just small adults. They have different physiology, and symptoms present differently. Pediatric care requires specialized knowledge.

Pregnancy and breastfeeding represent another high-risk category. What’s safe for most people might be harmful during pregnancy, and AI often lacks the nuanced information needed for these situations.

“AI can be a useful tool for understanding general health concepts, but it should never replace professional medical advice. The stakes are too high, and the technology isn’t designed to handle the complexity of individual human health.” – Dr. Sarah Chen, Digital Health Ethics Researcher

What Responsible AI Health Tools Look Like

Not all AI health applications carry equal risk. Some are designed more carefully than others.

Better tools clearly state their limitations upfront. They remind users they’re not providing medical advice and encourage consultation with healthcare providers.

They ask qualifying questions before making suggestions. A well-designed tool inquires about medications, allergies, and existing conditions.

They provide sources for their recommendations. You should be able to trace advice back to legitimate medical research or guidelines.

They know when to escalate. Good health AI recognizes red flag symptoms and directs users to seek immediate care.

They avoid making definitive diagnoses. Instead of saying “you have condition X,” they might say “these symptoms could indicate several conditions, including X, Y, and Z. A healthcare provider can determine which through proper testing.”

The Accountability Gap

This issue doesn’t get discussed enough. When AI gives you bad health advice and you get hurt, who’s responsible?

The company that made the AI will point to terms of service that disclaim medical liability. The AI itself can’t be sued, and it can’t lose a medical license because it never had one.

Your doctor, on the other hand, carries malpractice insurance and can face serious consequences for negligent advice.

This accountability gap means you bear all the risk when following AI health recommendations. There’s no safety net, no recourse, no professional standing behind the information.

That reality should inform how much trust you place in these tools.

Building a Safer Approach

Using AI for health information doesn’t have to be all or nothing. A balanced approach lets you benefit from the technology while protecting yourself from its limitations.

Start with the assumption that AI responses need verification. This mental shift prevents you from treating chatbot advice as authoritative.

Use AI for understanding medical terminology, learning about general health concepts, or preparing questions for your doctor. These applications play to its strengths while avoiding its weaknesses.

Keep a running list of medications, supplements, and health conditions to reference when evaluating any health advice, AI-generated or otherwise. This helps you spot potential problems.

Develop a relationship with a primary care provider who knows your history. This gives you a trusted source to consult when you’re unsure about information you’ve found.

Learn to recognize the difference between health education and medical advice. Education helps you understand how the body works. Advice tells you what to do about your specific situation.

AI can be decent at education. It’s terrible at advice.

Protecting Yourself and Others

Your own health literacy helps, but think about people in your life who might not recognize these risks.

Older relatives might not understand that chatbots aren’t actual doctors. Kids and teenagers treat AI like an all-knowing friend. People with health anxiety might latch onto AI-generated worst-case scenarios.

Talking with family members about the risks of AI health advice creates a safety buffer. Share what you’ve learned about limitations and red flags.

When you see someone posting about following AI health recommendations on social media, consider gently sharing information about verification and professional consultation.

This isn’t about being the health police. It’s about recognizing that AI tools are powerful, widely accessible, and poorly understood by most users.

Making AI Work for You Safely

The technology isn’t going anywhere. AI tools will only become more sophisticated and more integrated into how we access health information.

That makes learning to use them safely an essential skill, not an optional one.

Think of AI as a research assistant with significant limitations rather than a medical expert. Use it to gather information, not to make decisions.

Always maintain the final decision-making authority yourself, in consultation with qualified healthcare providers when needed.

Pay attention to how different AI tools handle health questions. Some are more responsible than others, and that matters.

Stay skeptical of confident-sounding responses. The most dangerous AI advice often sounds the most authoritative.

Remember that your health is too important for shortcuts. The few minutes saved by accepting AI advice without verification aren’t worth the potential consequences.

These tools can enhance your health literacy and help you have more informed conversations with healthcare providers. That’s valuable.

But they can’t replace the expertise, judgment, and accountability that come with actual medical care. Understanding that distinction keeps you safe while still benefiting from what AI does well.

Your health decisions deserve the same careful thought you’d give any important choice. AI can inform that process, but it shouldn’t control it.