You scroll through your feed and see a shocking image. A friend shared it. Then another. Within hours, it’s everywhere. The problem? It’s completely false.

This happens thousands of times every day across social platforms. False information moves faster than truth, reaching more people and causing real damage before anyone can stop it.

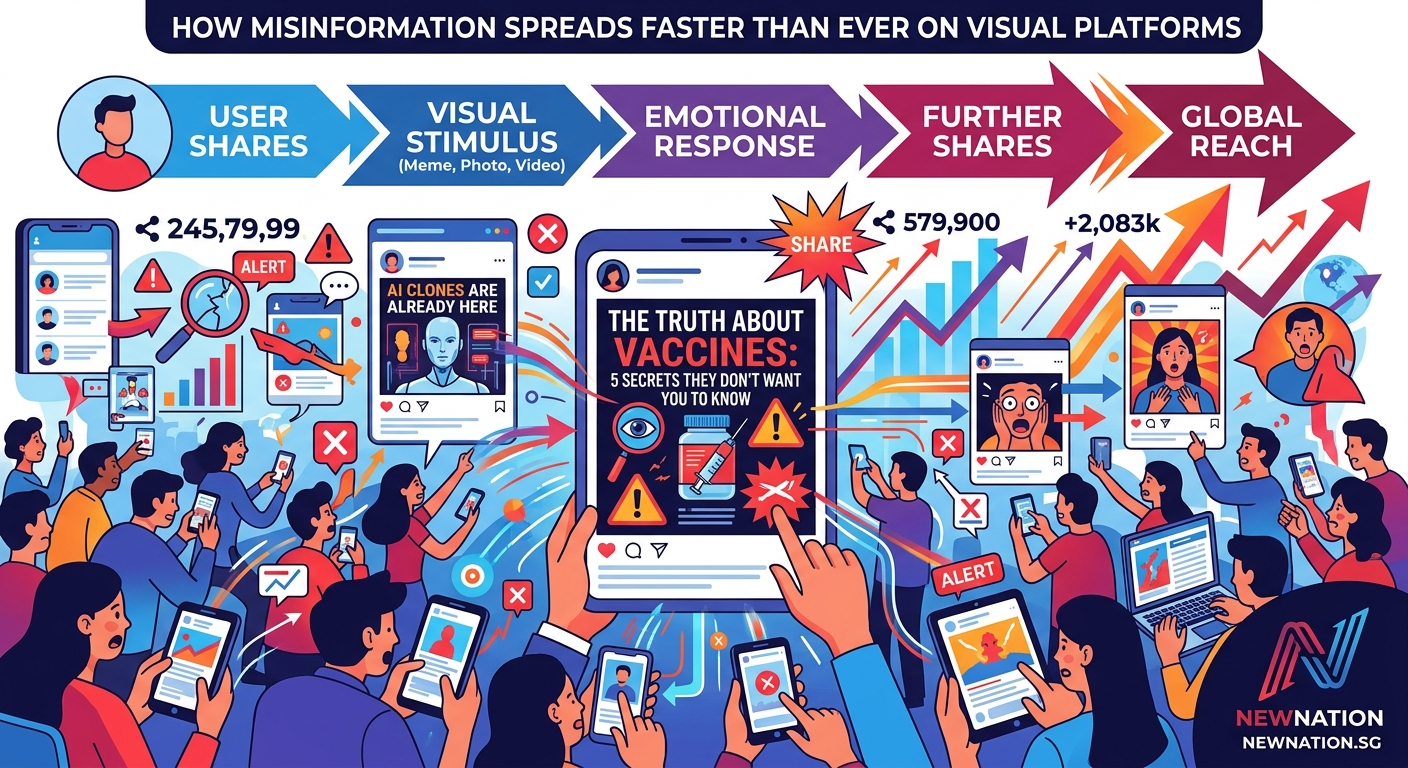

Misinformation spreads rapidly on social media through a combination of platform algorithms, visual content appeal, emotional triggers, and human sharing behavior. Visual platforms amplify false information because images and videos require less cognitive effort to process than text, making them more shareable. Understanding these mechanisms helps educators, researchers, and journalists combat the spread of falsehoods and protect information integrity.

Why false information travels faster than facts

A 2018 MIT study of Twitter found that false news travels far faster than accurate news: true stories took about six times as long as false ones to reach 1,500 people. The reason isn’t mysterious.

Lies are often more interesting than truth. They trigger stronger emotions. They promise scandal, danger, or conspiracy. Truth tends to be boring by comparison.

Platform algorithms don’t distinguish between true and false content. They only measure engagement. A sensational lie that gets shares, comments, and reactions will reach far more people than an accurate but mundane fact.
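
To make the point concrete, here is a minimal sketch of a feed ranker that scores posts on engagement alone. The `Post` fields and the weights in `engagement_score` are illustrative assumptions, not any platform's actual formula. The ranker never reads the `is_accurate` flag.

```python
from dataclasses import dataclass


@dataclass
class Post:
    title: str
    shares: int
    comments: int
    reactions: int
    is_accurate: bool  # known to us, but never read by the ranker


def engagement_score(post: Post) -> int:
    # Hypothetical weights: shares count most because they create new viewers.
    return 3 * post.shares + 2 * post.comments + post.reactions


def rank_feed(posts: list[Post]) -> list[Post]:
    # Sort purely by engagement; accuracy plays no part in the ordering.
    return sorted(posts, key=engagement_score, reverse=True)


posts = [
    Post("Council approves routine budget", shares=40, comments=15,
         reactions=120, is_accurate=True),
    Post("Shocking secret they are hiding from you", shares=900, comments=400,
         reactions=2500, is_accurate=False),
]

for post in rank_feed(posts):
    print(engagement_score(post), post.title)
```

With these made-up numbers the false post scores 6000 against 270 for the accurate one, so it lands at the top of the feed.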

The math works against truth. When misinformation generates more engagement, algorithms reward it with greater reach. This creates a feedback loop that accelerates false information while suppressing corrections.

The visual advantage that amplifies falsehoods

Images and videos spread faster than text-based content. A viewer grasps the gist of an image in a fraction of a second, while text has to be read line by line.

This creates a perfect storm for misinformation. A doctored photo or misleading video can convey a false narrative instantly. No reading required. No fact-checking pause.

Visual platforms like Instagram, TikTok, and Facebook prioritize this content. Their entire design encourages rapid scrolling and instant reactions. Users make split-second decisions about sharing based on emotional response, not accuracy.

Deepfakes and AI-generated images have made this worse. Technology now allows anyone to create convincing fake photos and videos. The barrier to producing sophisticated misinformation has collapsed.

How platform algorithms fuel the fire

Social media algorithms optimize for one thing: keeping you on the platform. They show you content that generates strong reactions.

Misinformation often generates stronger reactions than accurate information. It makes people angry, scared, or outraged. These emotions drive engagement.

Here’s how the algorithm cycle works:

- Someone posts false information with emotional appeal

- Early viewers react strongly and share it

- The algorithm detects high engagement and pushes the content to more users

- Each new wave of viewers repeats the pattern

- The content goes viral before fact-checkers can respond
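
The cycle above can be sketched as a toy simulation. Everything here is an assumption made for illustration: the function name `simulate_waves`, the idea that a fixed percentage of each wave shares the post, and the number of new viewers each share brings in.

```python
def simulate_waves(initial_viewers: int, share_percent: int,
                   viewers_per_share: int, waves: int) -> list[int]:
    """Return the number of new viewers in each successive wave."""
    new_viewers = [initial_viewers]
    for _ in range(waves - 1):
        # A fixed percentage of the latest wave shares the post...
        shares = new_viewers[-1] * share_percent // 100
        # ...and the algorithm shows each share to a batch of new users.
        new_viewers.append(shares * viewers_per_share)
    return new_viewers


# Hypothetical share rates: 10% for an emotional falsehood, 2% for a dull fact.
emotional = simulate_waves(100, share_percent=10, viewers_per_share=30, waves=5)
mundane = simulate_waves(100, share_percent=2, viewers_per_share=30, waves=5)

print("emotional:", emotional)  # [100, 300, 900, 2700, 8100]
print("mundane:  ", mundane)    # [100, 60, 30, 0, 0]
```

In this sketch the only difference between the two posts is how often viewers share them. One grows with every wave and the other fades out.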

The algorithm doesn’t care about truth. It cares about time spent on platform and ad revenue. Misinformation often performs better on both metrics.

The psychology behind why people share falsehoods

Most people who spread misinformation don’t know it’s false. They genuinely believe they’re sharing important information.

Several psychological factors drive this behavior:

- Confirmation bias makes people accept information that matches existing beliefs

- Social identity motivates sharing content that strengthens group membership

- Emotional arousal reduces critical thinking and increases impulsive sharing

- The illusion of knowledge makes people overestimate their ability to spot falsehoods

- Authority bias leads people to trust content from sources they perceive as credible

When your uncle shares a fake news article, he’s not trying to deceive anyone. He saw something that confirmed what he already believed, felt an emotional response, and shared it to warn others or express solidarity with his group.

The platform made sharing easy. One tap. No friction. No warning. No requirement to read the full article or verify the source.

The role of influencers and verified accounts

Verified accounts and influencers greatly amplify misinformation. When someone with 100,000 followers shares false information, it can reach a massive audience within minutes.

The verification checkmark creates false authority. People assume verified accounts are trustworthy. They’re not. Verification only confirms identity, not accuracy.

Influencers often share content without verification because their business model rewards speed and engagement. Being first matters more than being right. A viral post generates revenue even if it’s later debunked.

Some influencers knowingly spread misinformation because it drives engagement. Controversy and outrage build audiences faster than careful, accurate reporting.

Common techniques that make misinformation spread

False information uses predictable patterns to maximize spread. Understanding these techniques helps you spot them.

| Technique | How It Works | Why It Spreads |
|---|---|---|
| Emotional manipulation | Uses fear, anger, or outrage to trigger sharing | People share content that makes them feel strongly |
| False urgency | Claims immediate action needed | Creates pressure to share before thinking |
| Out-of-context visuals | Uses real images with false captions | Images provide false credibility |
| Authority mimicry | Imitates news outlets or experts | Bypasses skepticism through borrowed trust |
| Partial truths | Mixes facts with falsehoods | Makes the entire claim seem credible |
| Us vs. them framing | Creates tribal divisions | People share to signal group loyalty |

These techniques exploit how your brain makes decisions under time pressure and emotional stress. Social platforms are designed to keep you in exactly that state.

The speed problem that favors falsehoods

Misinformation spreads in minutes. Fact-checking takes hours or days. This timing gap is critical.

By the time fact-checkers debunk a false claim, it has already reached millions of people. The correction reaches a fraction of the original audience.
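
A rough back-of-the-envelope calculation shows how large the gap can get. The doubling interval, the six-hour fact-check delay, and the 10% correction reach below are all hypothetical numbers chosen only to illustrate the arithmetic.

```python
def reach_after(minutes: int, initial_viewers: int,
                doubling_minutes: int, audience_cap: int) -> int:
    """Viewers reached after `minutes`, assuming reach doubles at a fixed interval."""
    doublings = minutes // doubling_minutes
    return min(initial_viewers * 2 ** doublings, audience_cap)


# Hypothetical numbers, chosen only to illustrate the gap.
FACT_CHECK_DELAY_MINUTES = 6 * 60  # the debunk is published after six hours
CORRECTION_REACH_PERCENT = 10      # share of the original audience that sees it

reached = reach_after(FACT_CHECK_DELAY_MINUTES, initial_viewers=100,
                      doubling_minutes=30, audience_cap=5_000_000)
corrected = reached * CORRECTION_REACH_PERCENT // 100

print(f"Saw the false claim before the fact-check: {reached:,}")  # 409,600
print(f"Later saw the correction: {corrected:,}")                 # 40,960
```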

People also remember the initial false claim more strongly than the correction. This is called the continued influence effect: even after learning that something is false, people often keep relying on it in their reasoning and decisions.

Social platforms have tried to address this with warning labels and reduced distribution for flagged content. These measures help but arrive too late to prevent initial spread.

The fundamental problem remains: verification is slow, sharing is instant.

Why visual platforms accelerate the problem

TikTok, Instagram, and YouTube Shorts present unique challenges. Their format favors emotional, visual content consumed rapidly.

Users swipe through video after video, often spending only a few seconds on each. There’s no time for critical evaluation. The next video starts automatically. The platform rewards constant motion and novelty.

Misinformation on these platforms often takes the form of:

- Misleading video edits that remove context

- Fake expert testimonials filmed to look credible

- Staged scenarios presented as real events

- AI-generated voices reading false information

- Conspiracy theory compilations set to dramatic music

The comment sections often contain corrections, but most users never read them. They watch, react, and scroll.

The echo chamber effect that reinforces falsehoods

Algorithms show you more of what you engage with. If you watch one conspiracy video, you’ll see dozens more.

This creates information bubbles where false narratives become self-reinforcing. Everyone in your feed agrees. Dissenting voices are absent. The misinformation feels like consensus reality.
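
A toy model shows how quickly a bubble can form. It assumes a recommender that keeps one weight per topic and raises a topic's weight every time you engage with it. The function name `feed_share_over_time` and the `boost` parameter are hypothetical; real recommenders are far more complex.

```python
def feed_share_over_time(topics: list[str], engaged_topic: str,
                         rounds: int, boost: float = 1.0) -> list[float]:
    """Fraction of the feed devoted to `engaged_topic` after each round."""
    weights = {topic: 1.0 for topic in topics}  # every topic starts equal
    shares = []
    for _ in range(rounds):
        # Each engagement nudges the recommender toward the same topic.
        weights[engaged_topic] += boost
        shares.append(weights[engaged_topic] / sum(weights.values()))
    return shares


topics = ["conspiracy", "sports", "cooking", "local news"]
shares = feed_share_over_time(topics, "conspiracy", rounds=10)

print([round(share, 2) for share in shares])
```

Under these assumptions the topic starts at a quarter of the feed, reaches 40% after one engagement, and passes three quarters after ten.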

These echo chambers make correction nearly impossible. When someone finally encounters a fact-check, it feels like the outlier. The false narrative has been reinforced hundreds of times. The correction appears once.

Group polarization intensifies within these bubbles. Beliefs become more extreme as members reinforce each other. Moderate voices leave or stay silent. The loudest, most extreme voices dominate.

How bots and coordinated campaigns multiply reach

Not all sharing is organic. Coordinated campaigns use bot networks to artificially amplify misinformation.

These operations work like this:

- Create dozens or hundreds of fake accounts

- Post the same false content across multiple accounts simultaneously

- Use bots to like, share, and comment on the posts

- Create the appearance of viral organic spread

- Real users see the apparent popularity and join the sharing
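
The simplest version of this pattern, identical text posted by several accounts within minutes, is also the easiest to detect. The sketch below is a simplified illustration, not a production detector. The function name, the `(account_id, timestamp_seconds, text)` tuple format, and the thresholds are all assumptions.

```python
from collections import defaultdict


def find_coordinated_posts(posts, min_accounts=3, window_seconds=300):
    """posts: iterable of (account_id, timestamp_seconds, text) tuples.

    Returns {normalised_text: [account_ids]} for texts posted by at least
    `min_accounts` distinct accounts within `window_seconds` of each other.
    """
    by_text = defaultdict(list)
    for account, timestamp, text in posts:
        # Normalise case and whitespace so trivial edits still match.
        key = " ".join(text.lower().split())
        by_text[key].append((timestamp, account))

    flagged = {}
    for key, entries in by_text.items():
        entries.sort()
        start = 0
        for end in range(len(entries)):
            # Slide the window forward until it spans at most window_seconds.
            while entries[end][0] - entries[start][0] > window_seconds:
                start += 1
            accounts = {account for _, account in entries[start:end + 1]}
            if len(accounts) >= min_accounts:
                flagged[key] = sorted(accounts)
                break
    return flagged


posts = [
    ("acct_a", 0, "Breaking: the water supply is poisoned!"),
    ("acct_b", 20, "BREAKING: the water supply is  poisoned!"),
    ("acct_c", 45, "breaking: the water supply is poisoned!"),
    ("acct_d", 7200, "Lovely weather at the lake today"),
]
print(find_coordinated_posts(posts))
```

Exact-match checks like this catch only crude campaigns. Networks that vary their wording and timing slip straight past them.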

Platforms struggle to detect sophisticated bot networks. They mimic human behavior patterns. They post varied content. They interact with real users. They operate across time zones to appear authentic.

State actors, political campaigns, and commercial interests all use these techniques. The goal is to make false narratives appear popular and credible through artificial consensus.

“The most effective misinformation doesn’t look like propaganda. It looks like what your friends are sharing. It confirms what you already suspect. It makes you feel informed and engaged. That’s exactly what makes it dangerous.”

The correction challenge nobody has solved

Debunking misinformation is harder than spreading it. Corrections face multiple obstacles.

First, they reach fewer people. The algorithm doesn’t favor corrections because they generate less engagement. Anger spreads faster than calm clarification.

Second, corrections can backfire. The familiarity backfire effect describes how repeating a false claim, even to debunk it, makes the claim more familiar and can sometimes strengthen belief in the original falsehood.

Third, corrections threaten identity. When misinformation aligns with group identity or worldview, corrections feel like personal attacks. People reject them to protect their sense of self.

Fourth, the effort gap is enormous. Creating convincing misinformation takes minutes. Thorough fact-checking requires research, expert consultation, and careful writing. The liar has a massive efficiency advantage.

What platforms could do but mostly don’t

Social media companies have tools to slow misinformation. They rarely use them aggressively because it conflicts with growth and engagement goals.

Effective measures would include:

- Friction before sharing, requiring users to read articles before posting

- Downranking content flagged by fact-checkers immediately, not after it goes viral

- Removing recommendation algorithms for news and political content

- Requiring verification for accounts that reach large audiences

- Transparent labeling of AI-generated or manipulated media

- Slower distribution for potentially false content until verified
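
Two of these measures, friction before sharing and reduced distribution for flagged content, are simple to express in code. The sketch below is hypothetical: `ShareAttempt`, the returned action names, and the multiplier values are illustrative assumptions, not any platform's real API.

```python
from dataclasses import dataclass


@dataclass
class ShareAttempt:
    opened_link: bool  # did the user open the article before sharing?
    flagged: bool      # has a fact-checker flagged the content?


def share_prompt(attempt: ShareAttempt) -> str:
    """Decide what the user sees when they tap the share button."""
    if attempt.flagged:
        return "show_warning"  # interrupt with the fact-check first
    if not attempt.opened_link:
        return "ask_to_read"   # friction: suggest reading before posting
    return "allow"


def distribution_multiplier(flagged: bool, reviewed: bool) -> float:
    """Scale a post's reach; the values are arbitrary illustrations."""
    if flagged:
        return 0.1  # downrank immediately, not after it goes viral
    if not reviewed:
        return 0.5  # slower distribution until verified
    return 1.0


print(share_prompt(ShareAttempt(opened_link=False, flagged=False)))  # ask_to_read
print(distribution_multiplier(flagged=True, reviewed=True))          # 0.1
```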

These measures would reduce misinformation but also reduce engagement and time on platform. That means less advertising revenue. The business model conflicts with information accuracy.

Some platforms have implemented weak versions of these ideas. The results show they work when applied seriously. They’re rarely applied seriously.

Protecting yourself and your community

You can’t stop misinformation from existing, but you can reduce its impact on your circles.

Before sharing anything that triggers strong emotion, pause. Ask yourself:

- Does this confirm what I already believe too perfectly?

- Is the source credible and transparent about methods?

- Can I find this reported by multiple independent outlets?

- Does the image or video show what the caption claims?

- Am I sharing because it’s true or because it feels true?

When you see misinformation in your network, respond thoughtfully. Publicly shaming people who share falsehoods makes them defensive and less likely to change their minds.

Private messages work better. Share fact-checks gently. Acknowledge that the false information is convincing. Explain why you looked into it further.

Model good information hygiene. Share accurate sources. Admit when you’re uncertain. Correct your own mistakes publicly. This creates cultural pressure toward accuracy.

Teaching others to recognize manipulation

Educators and journalists have a special role in building information literacy. The skills needed to navigate modern media aren’t intuitive.

Young people need to learn:

- How algorithms shape what they see

- How to reverse image search to find original context

- How to identify emotional manipulation techniques

- How to evaluate source credibility

- How to sit with uncertainty instead of accepting convenient falsehoods

These skills aren’t taught in most schools. Media literacy education lags decades behind the information environment students actually inhabit.

Adults need these skills too. Many people over 40 grew up trusting published information. They haven’t adapted to an environment where anyone can publish anything that looks professional.

Workshops, articles, and public education campaigns can help. But they’re fighting against platforms optimized to exploit cognitive biases and emotional triggers.

When misinformation causes real harm

False information isn’t just annoying. It has a body count.

Medical misinformation has convinced people to reject effective treatments and embrace dangerous alternatives. Election misinformation has undermined democratic processes. Climate misinformation has delayed action on existential threats.

During health crises, viral falsehoods about treatments, vaccines, and transmission have directly led to preventable deaths. The people spreading this information usually believe they’re helping.

Financial scams spread through social media have cost victims billions. Fake investment opportunities, cryptocurrency schemes, and phishing attacks all use the same viral mechanics as other misinformation.

Reputational damage from false accusations can destroy lives. Doctored images and out-of-context videos have ended careers and relationships. The corrections never catch up to the initial spread.

Building systems that favor truth

Solving this problem requires changes at multiple levels. Individual behavior matters but isn’t sufficient.

Platform design must change. Algorithms that optimize purely for engagement will always favor misinformation. Alternative metrics that weight accuracy and information quality could help.
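
As one illustration of what such a metric might look like, the sketch below multiplies engagement by a quality rating. The `source_quality` value is a hypothetical score between 0.0 and 1.0, for example one derived from a source's fact-checking record. How to produce a trustworthy rating is the hard part and is not addressed here.

```python
def quality_weighted_score(engagement: int, source_quality: float) -> float:
    """Scale raw engagement by a hypothetical 0.0-1.0 quality rating."""
    return engagement * source_quality


# (title, engagement, source_quality) with made-up numbers.
posts = [
    ("Shocking secret they are hiding from you", 6000, 0.02),
    ("Council approves routine budget", 270, 0.90),
]

by_engagement = sorted(posts, key=lambda p: p[1], reverse=True)
by_quality = sorted(posts, key=lambda p: quality_weighted_score(p[1], p[2]),
                    reverse=True)

print("Engagement only: ", by_engagement[0][0])
print("Quality-weighted:", by_quality[0][0])
```

With these numbers the sensational post leads the engagement-only ranking, while the routine but accurate post leads once quality is factored in.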

Regulation may be necessary. In the United States, Section 230 of the Communications Decency Act shields platforms from most legal liability for the user content they host and amplify. This weakens the incentive to address the problem seriously.

Funding for fact-checking and investigative journalism needs to increase. The economics of online media favor cheap, fast content over expensive verification.

Education systems must prioritize media literacy as a core skill, not an afterthought. Understanding information systems is as fundamental as reading and math.

None of these changes will happen automatically. They require sustained pressure from users, advertisers, and regulators who prioritize information integrity over engagement metrics.

Making sense of our information environment

We live in an ecosystem designed to spread engaging content, not accurate content. Understanding this matters.

The platforms you use every day weren’t built to inform you. They were built to hold your attention and sell advertising. Misinformation often performs better than truth on those metrics.

This doesn’t mean social media is purely harmful. It means you need to approach it with eyes open. The feed isn’t reality. It’s a curated selection optimized for engagement.

When you understand how misinformation spreads, you can resist it. You can verify before sharing. You can recognize manipulation techniques. You can build habits that favor accuracy over emotional satisfaction.

Your choices matter. Every time you pause before sharing, you break the chain. Every time you fact-check something convincing, you protect your network. Every time you admit uncertainty instead of spreading speculation, you model better behavior.

The problem is systemic, but solutions start with individuals making better choices, one post at a time.